Hello, internet! Happy new 2021! I hope it’s going to be better than the previous one, which was definitely the worst year ever for some people. The sun always comes up, as they say, so let’s not start this article on a dark note and focus instead on a more interesting, brain-tickling subject: zero downtime deployment strategies!

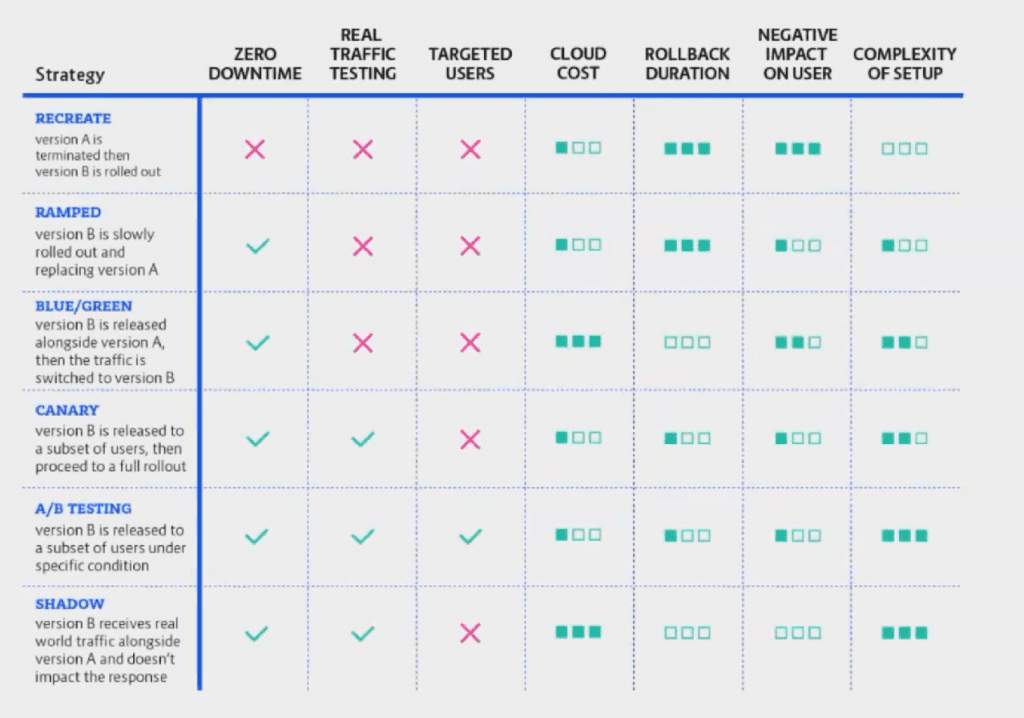

When you hear “Zero Downtime Deployment Strategies”, what exactly comes to your mind? That’s right, the name is more or less self-explanatory, but did you know there are actually several strategies you could incorporate into your project? Today I am going to mention my favorites in no particular order and tell you some basics about how they work. So without keeping you impatient, let’s get to it!

Recreate Deployment Strategy

The Recreate strategy works by stopping your existing application and then starting the newer version, which means you will face a short downtime in between. As soon as the new version is up, the Load Balancer is ready to redirect traffic to it. The usual downtime for this deployment strategy is a couple of minutes, although it depends on the type and size of your application. This kind of deployment is usually done with Kubernetes or something similar that makes the whole procedure easier to pull off. With kubectl, this is an example of how you could do it:

    kubectl delete -f deployment.yml

After that, all you have to do is change the version of the Docker image inside deployment.yml to the newer one, save it, and then:

    kubectl apply -f deployment.yml

As I’ve mentioned above, it will take a few minutes to start and complete the new deployment. You can verify that the new version has started if it’s listed after you execute:

    kubectl get pods

Ramped or Rolling Update Deployment Strategy

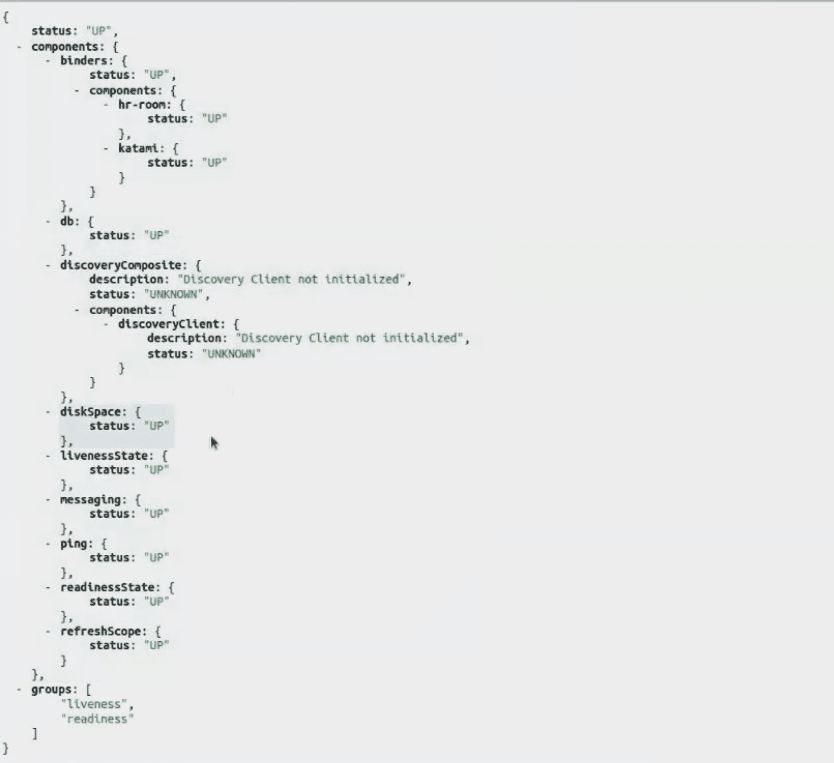

Compared to the Recreate Deployment Strategy, here you will not actually have a downtime period. This strategy works by rolling out the new version to instances gradually and shutting down the old ones as it goes. So if your cluster has 3 instances, the Load Balancer redirects traffic to new instances as soon as they are ready, and the old ones are then deleted. You can see in the illustration that the substitution of instances happens gradually, one by one. This is actually the default deployment strategy in Kubernetes, and I am sure you might wonder how Kubernetes knows when a new instance is ready so it can shut down an old one. It does so by querying a checkpoint to find out whether the service can take traffic. You’ve heard about health checks before, haven’t you? Well, this is similar; it’s called a Readiness Probe. There are several types of those, but usually the simplest one to implement is an HTTP one. Here is an example of one:

    readinessProbe:
      httpGet:
        path: /actuator/health/readiness
        port: 80
      initialDelaySeconds: 45
      periodSeconds: 5
      failureThreshold: 3

So in this case, our app or service exposes an endpoint at /actuator/health/readiness. If it returns a 200 response, the app is healthy and ready to accept traffic; otherwise, it’s not. You can see in the example that Kubernetes starts querying after 45 seconds, and after that it queries every 5 seconds. If it gets 3 failed checks in a row, it marks the application as not ready (unhealthy). Pretty simple, am I right? Usually you get health endpoints out of the box with Java or .NET apps, but if not, you can always make your own, for example in Java. It’s pretty simple.
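As a rough illustration of a hand-made readiness endpoint, here is a minimal sketch that uses only the HTTP server bundled with the JDK. The class name `ReadinessEndpoint`, the `READY` flag, the JSON bodies, and port 8080 are all assumptions made for this example; a framework such as Spring Boot Actuator would normally give you the endpoint for free.

```java
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.OutputStream;
import java.net.InetSocketAddress;
import java.nio.charset.StandardCharsets;
import java.util.concurrent.atomic.AtomicBoolean;

// Hypothetical example class, not part of any framework.
class ReadinessEndpoint {
    // Flipped to true once the application has finished starting up.
    static final AtomicBoolean READY = new AtomicBoolean(false);

    // 200 tells the probe the app can take traffic, 503 tells it to keep waiting.
    static int statusCode(boolean ready) {
        return ready ? 200 : 503;
    }

    // Starts an HTTP server that answers the readiness probe on the given port.
    static HttpServer start(int port) throws IOException {
        HttpServer server = HttpServer.create(new InetSocketAddress(port), 0);
        server.createContext("/actuator/health/readiness", exchange -> {
            boolean ready = READY.get();
            String json = ready ? "{\"status\":\"UP\"}" : "{\"status\":\"OUT_OF_SERVICE\"}";
            byte[] body = json.getBytes(StandardCharsets.UTF_8);
            exchange.getResponseHeaders().add("Content-Type", "application/json");
            exchange.sendResponseHeaders(statusCode(ready), body.length);
            try (OutputStream out = exchange.getResponseBody()) {
                out.write(body);
            }
        });
        server.start();
        return server;
    }

    public static void main(String[] args) throws IOException {
        // Port 8080 is an assumption; it has to match the port in the probe config.
        start(8080);
        // ... real start-up work (connections, caches) would happen here ...
        READY.set(true);
    }
}
```

Until `READY` is set, the endpoint answers 503, so the probe keeps the instance out of rotation and no traffic reaches a half-started app.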

Blue / Green Deployment Strategy

In this deployment strategy, we have a new version being created in parallel to the old version. The reason we would want to do this is that we can expose the new version on a secret URL that nobody else knows, check it ourselves, and then, once we are sure that it works properly, switch traffic from the old version to the new version. The two versions running side by side are the “blue” one and the “green” one, and that’s why it is called the Blue / Green deployment strategy. The colors themselves don’t mean anything, btw; it’s also known as the Black / Red or Red / Black (Netflix) deployment strategy. Do not mix it up with the A / B deployment strategy, as that is a whole different deployment strategy. Explaining it more deeply would take a lot of time, and it might actually be a subject for a whole new article in the future, but for now, you could learn more about it here.

Canary Deployment Strategy

The name of this deployment strategy comes from a very interesting story that goes way back. You can read about it in my other article. Go check it out, you will love the story, I promise. So basically what happens here is that you have an old version and a new version being rolled out alongside it. The difference, though, is that the Load Balancer directs only a portion of the traffic to the new version. And yes, we are talking about a percentage of real traffic. Once we see that everything is working as it should, we can increase the percentage of traffic going to the new version. An example of the settings should look like this:

    annotations:
      nginx.ingress.kubernetes.io/force-ssl-redirect: "true"
      nginx.ingress.kubernetes.io/canary: "true"
      nginx.ingress.kubernetes.io/canary-weight: "20"

And yes, canary-weight is the percentage of real traffic going to the new version.
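To make the idea of a weight more concrete, here is a small sketch of weight-based routing. It is only an illustration of the concept, not how the NGINX ingress controller is implemented; the class `CanaryRouter` and the method `choose` are hypothetical names.

```java
import java.util.Random;

// Hypothetical illustration of weighted routing, not the ingress controller's code.
class CanaryRouter {
    // weightPercent is 0..100; roll is a random number in 0..99 drawn per request.
    static String choose(int weightPercent, int roll) {
        return roll < weightPercent ? "canary" : "stable";
    }

    public static void main(String[] args) {
        Random random = new Random();
        int total = 10_000;
        int canaryHits = 0;
        for (int i = 0; i < total; i++) {
            if (choose(20, random.nextInt(100)).equals("canary")) {
                canaryHits++;
            }
        }
        // With a weight of 20, roughly one request in five should land on the canary.
        System.out.println(canaryHits + " of " + total + " requests went to the canary");
    }
}
```

Raising the weight step by step (20, 50, 100) is what gradually moves real traffic over to the new version.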

A / B Deployment Strategy

A / B Deployment Strategy is very similar to the Canary Deployment Strategy. The difference is that instead of redirecting a percentage of traffic from the old version to the new one, you control which type of requests go to the new version. As you could see in the illustration above, this is very useful when you want to test the new version just for mobile users, for example. There are several ways of doing it, but one of the simplest is by using a cookie. I’ve personally used this deployment strategy before to split the traffic depending on geolocation, and it worked perfectly for me. The example of this is very similar to the one above:

    annotations:
      nginx.ingress.kubernetes.io/force-ssl-redirect: "true"
      nginx.ingress.kubernetes.io/canary: "true"
      nginx.ingress.kubernetes.io/canary-by-cookie: "name-of-the-cookie"

Yes, you are right once again! It’s the canary-by-cookie parameter that you need to declare. With the NGINX ingress controller, visitors whose cookie with that name has the value always are sent to the new version of your application, and visitors with the value never stay on the old one. How awesome is that!?
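The cookie rule can be sketched in a few lines. This is an illustration of the decision only, based on the documented always / never cookie values; the class `CookieRouter`, the method `choose`, and the `fallback` parameter are hypothetical names for this example.

```java
import java.util.Map;

// Hypothetical illustration of cookie-based routing, not the ingress controller's code.
class CookieRouter {
    // cookies: the request's cookies; cookieName: the name from canary-by-cookie;
    // fallback: what the remaining rules (for example canary-weight) would pick.
    static String choose(Map<String, String> cookies, String cookieName, String fallback) {
        String value = cookies.get(cookieName);
        if ("always".equals(value)) {
            return "canary";   // visitor explicitly opted in to the new version
        }
        if ("never".equals(value)) {
            return "stable";   // visitor explicitly pinned to the old version
        }
        return fallback;       // cookie missing or any other value: ignore it
    }

    public static void main(String[] args) {
        Map<String, String> cookies = Map.of("name-of-the-cookie", "always");
        System.out.println(choose(cookies, "name-of-the-cookie", "stable"));
    }
}
```

Your application (or a test script) sets the cookie for the audience you want, such as mobile users, and everyone else keeps seeing the old version.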

Shadow Deployment Strategy

I didn’t want to end the article without mentioning the Shadow Deployment Strategy, even though it’s a really complex one to do. Here again, you have a new version being deployed alongside the existing old version, and what happens is that the Load Balancer actually sends the requests to both the old and the new version of your application! You might want to use this in scenarios where you want to test how the new version behaves under real traffic while still having the old version up. The reason I’ve mentioned that this deployment strategy is complicated to use is that you need to be very careful about any integrations you might have with other systems, ports, and adapters, for example. I’ve seen a case where a shadow deployment caused an online shop to duplicate every single purchase, which caused a lot of issues, as you might imagine… So make sure you have sandboxes ready to test similar features.
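Here is a rough sketch of the mirroring idea, and of why side effects get duplicated. It is deliberately simplified: real setups usually mirror asynchronously at the load balancer or proxy level, while this example calls both versions in sequence. `ShadowProxy` and `handle` are hypothetical names.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Function;

// Hypothetical illustration of request shadowing, simplified to synchronous calls.
class ShadowProxy {
    // Sends the request to both versions but only returns the live response.
    static <Q, R> R handle(Q request, Function<Q, R> live, Function<Q, R> shadow) {
        R response = live.apply(request);
        try {
            shadow.apply(request);   // the shadow response is discarded
        } catch (RuntimeException e) {
            // A failing shadow version must never affect the real user.
        }
        return response;
    }

    public static void main(String[] args) {
        // Both versions write to the same "orders" store: the duplicate purchase problem.
        List<String> orders = new ArrayList<>();
        String response = handle("order-1",
                request -> { orders.add(request); return "handled by old version"; },
                request -> { orders.add(request); return "handled by new version"; });
        System.out.println(response + ", orders stored: " + orders.size());
    }
}
```

Because both handlers share one order store, a single purchase is recorded twice, which is why the shadow version should talk to sandboxed integrations.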

Summary

So we went through several deployment strategies. I am curious about what you think of them and which one you would use the most. If you have any questions that you think I could help you with, please don’t hesitate to hit me up here or on socials. I am always online on Twitter.

Hopefully, with my son coming soon to this world, I will still have some free time to keep writing. I am planning to write about each of these strategies in a more detailed way, as well as explain some basic terms from the article, like Load Balancer. I would say “Stay positive” but oh well… 2020 made positive negative so… stay negative!
